Projects

Perturbation Learning Based Anomaly Detection

Project description/goals

Anomaly detection (AD) is an important research problem in many areas, such as computer vision. AD aims to distinguish abnormal data from normal data and is usually an unsupervised learning task, because anomalous samples are unavailable during training.

Importance/impact, challenges/pain points

Classical AD methods such as OCSVM [Schölkopf et al., 2001] and DSVDD [Ruff et al., 2018] require specific assumptions (e.g., a hypersphere) about the distribution or structure of the normal data. GAN-based approaches [Deecke et al., 2018; Perera et al., 2019] suffer from the instability of min-max optimization and have high computational costs.

Solution description

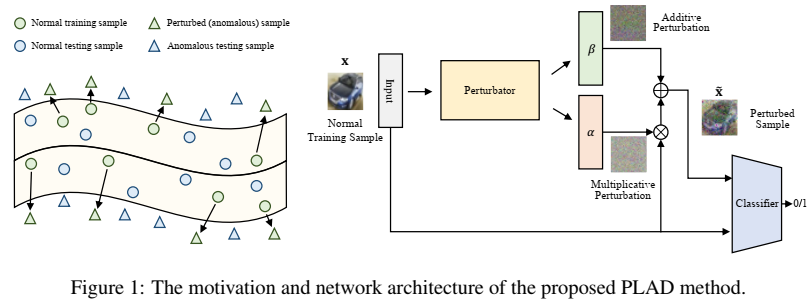

We propose a novel AD method called perturbation learning based anomaly detection (PLAD). PLAD learns a perturbator and a classifier jointly from the normal training data. The perturbator uses minimum effort to perturb normal data into abnormal data, while the classifier learns to separate the normal data and the perturbed data into two classes correctly.
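The interplay between the perturbator and the classifier can be illustrated with a minimal sketch of a possible training objective. The description above does not specify the form of the perturbation or the loss, so everything here is an assumption made for illustration: the perturbation is taken to be an element-wise scale `alpha` and shift `beta`, the classifier is a hypothetical linear logistic model with weights `w` and bias `b`, "minimum effort" is modeled as a squared penalty on the distance of `alpha` from 1 and of `beta` from 0, and `lam` is a hypothetical trade-off weight.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def classifier_prob(x, w, b):
    """Hypothetical linear classifier: probability that x is abnormal."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def perturb(x, alpha, beta):
    """Assumed perturbation form: element-wise scale and shift of x."""
    return [a * xi + bt for a, xi, bt in zip(alpha, x, beta)]


def plad_style_loss(x, alpha, beta, w, b, lam=1.0):
    """Illustrative objective for one normal sample x.

    Classification term: x should be labeled normal (0) and its
    perturbed copy abnormal (1), measured by binary cross-entropy.
    Effort term: penalizes perturbations that move far from the
    identity (alpha = 1, beta = 0), so the perturbation stays small.
    """
    eps = 1e-12  # guards against log(0)
    p_normal = classifier_prob(x, w, b)
    p_perturbed = classifier_prob(perturb(x, alpha, beta), w, b)
    cross_entropy = -math.log(1.0 - p_normal + eps) - math.log(p_perturbed + eps)
    effort = sum((a - 1.0) ** 2 for a in alpha) + sum(bt ** 2 for bt in beta)
    return cross_entropy + lam * effort


if __name__ == "__main__":
    x = [1.0, 2.0]
    w, b = [0.5, -0.25], 0.1
    # A small perturbation costs little effort but is harder to classify;
    # a large one is easier to classify but pays a higher effort penalty.
    print(plad_style_loss(x, [1.1, 0.9], [0.1, -0.1], w, b))
    print(plad_style_loss(x, [3.0, 0.2], [2.0, -2.0], w, b))
```

In a full implementation, the perturbator and classifier would typically be neural networks trained together by gradient descent on a loss of this kind, and at test time the classifier's abnormal-class probability could serve as the anomaly score. Those details are likewise assumptions, not statements about the actual PLAD implementation.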

Key contribution/commercial implication

Compared with state-of-the-art anomaly detection methods, our method does not require any assumption about the shape (e.g., a hypersphere) of the decision boundary and has fewer hyper-parameters to tune. Empirical studies on benchmark datasets verify the effectiveness and superiority of our method.

Next steps

Adapt the method to graph data and time series, and provide more theoretical analysis.

Team/contributors

Jinyu Cai (Fuzhou University), Visiting Student at SRIBD

Jicong Fan, SRIBD & CUHK-Shenzhen